timestretch – a fast sample time stretching implementation

Overview

When reducing or increasing the playback speed of an audio recording, it's pitch usually changes, leading to an audible "mickey mouse" effect. The SOLA algorithm provides a way to change the speed without altering the pitch.

Details

The basic idea behind the method lies in the inherent redundancy in the periodic waves of spoken words or music. A vowel consists of overlayed and repeating waves where a human ear would not mind a single one of those repetitions being there or not:

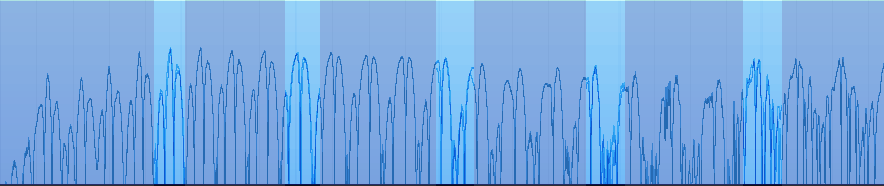

If we want to play a sample faster, we can try to find the period length corresponding to the base frequency of the recording in that place and overlap some periods, cross fading the first snippet one into the other. Or put it more visually intuitive:

Obviously the recording is shorter than before, meaning it can be played in less time. In order to reduce the play time by a fixed ratio, we can alter the length of the overlapping windows (bright blue).

To find the perfect offset to overlay our samples at, we simply brute force the sum of all differences of all samples for each offset, using the mean squared error and selecting the offset where the error is minimal – a process commonly called auto correlation. In our implementation this error is further biased towards the center of our window, so that the algorithm is not forced into selecting some sub optimal (and audibly bad) positions after a while, if the inherent period length the overlapping length differs only slightly.

Build instructions

timestretch is available from my git repository. Use git clone git://erdgeist.org/timestretch to check it out. An timestretch gitweb is available.

Currently there's only one source file containing the setup routine calc_convert_values, which takes sample rate (used to calculate a proper window length in ms, based on heuristic values) and a tempo, which is a floating point value giving the rate in which to slow down or speed up the recording. It fills global variables you might want to put in a context struct for your project. At exit, the value g_input_length is the minimum number of samples expected for later processing, g_output_length is the exact amount of samples produced by each run.

The actual work is done in the process_frame function which takes a pointer to the input and output buffer, the pointer to some scratch space (set up in calc_convert_values in this implementation) and a frame_flag indicating if this is the first frame (where nothing is there to cross fade with), a normal frame and the last frame, where no samples are kept for later cross fading and the caller can continue to resume to non-timestretched playback again.

License

timestretch is considered beer ware.